Fog computing moves data processing closer to where data is generated and used, bridging the gap between the cloud and end devices. Processing data locally reduces latency and network traffic, which makes fog computing a good fit for the Internet of Things (IoT), where real-time processing is critical. It also enables faster responses and better management of resources.

Many industries already benefit from fog computing, including healthcare, manufacturing, and transportation. It supports a wide range of applications such as smart grids, autonomous vehicles, and real-time analytics.

Understanding fog computing can help organizations increase performance while saving bandwidth and improving security. Its decentralized approach reduces the dependence on cloud infrastructure and enhances the reliability of data processing.

Image Credit: https://www.flickr.com/photos/77744839@N00/10555582084

Key Takeaways

- Fog computing reduces latency and improves efficiency.

- It supports real-time processing for IoT applications.

- It offers better resource management and security.

Fundamentals of Fog Computing

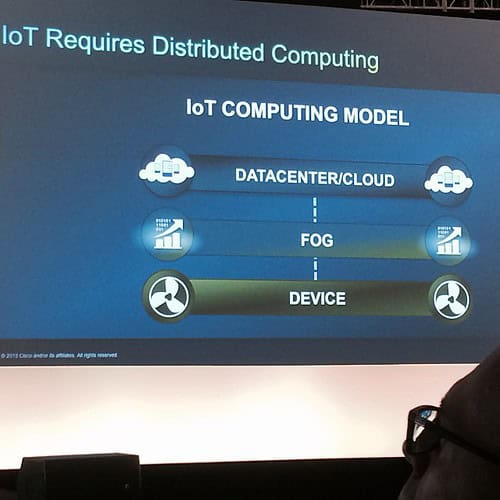

Fog computing extends cloud capabilities to the network’s edge, using both edge devices and fog nodes. It provides localized computing, storage, and networking services.

Key Concepts and Definitions

Fog computing brings data processing closer to IoT devices and users. It uses both edge devices and larger fog nodes, such as small base stations, to create a “mini cloud.” Data is then processed more quickly near the edge instead of traveling to the central cloud.

Because fog nodes handle much of the data locally, less of it has to cross the network to a distant data center, which reduces latency and saves bandwidth. Key elements include sensors, routers, and other edge devices that connect to fog nodes for better service delivery.

Fog Computing Architecture

The architecture of fog computing involves multiple layers. Edge devices collect data and send it to fog nodes, which provide processing and storage.

A typical fog node can be a router, switch, or micro data center. These nodes handle data processing at the edge, reducing the load on central cloud servers. This layered approach enables quicker response times and better bandwidth management.

Fog computing architecture balances computing power between local devices and centralized data centers. Time-sensitive tasks are handled locally, while fog nodes still interact with cloud resources for broader data needs such as long-term storage and large-scale analysis.
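The layered flow described above can be sketched in a few lines. This is a minimal illustration, not a reference design: the `FogNode` class, its `batch_size` parameter, and the `run_pipeline` helper are hypothetical names chosen for the example, and the summary fields are an assumption about what a cloud service might want to receive.

```python
from dataclasses import dataclass, field
from statistics import mean


@dataclass
class FogNode:
    """Hypothetical fog node: buffers raw edge readings, forwards summaries."""
    node_id: str
    batch_size: int = 5  # assumed number of raw readings per summary
    buffer: list = field(default_factory=list)

    def ingest(self, reading):
        """Store one reading; return a summary dict once the batch is full."""
        self.buffer.append(reading)
        if len(self.buffer) < self.batch_size:
            return None
        summary = {
            "node": self.node_id,
            "count": len(self.buffer),
            "min": min(self.buffer),
            "max": max(self.buffer),
            "mean": mean(self.buffer),
        }
        self.buffer.clear()
        return summary


def run_pipeline(readings, node):
    """Pass edge readings through a fog node; collect what would reach the cloud."""
    to_cloud = []
    for reading in readings:
        summary = node.ingest(reading)
        if summary is not None:
            to_cloud.append(summary)
    return to_cloud


if __name__ == "__main__":
    node = FogNode("fog-1")
    raw = [20.0, 21.0, 19.0, 22.0, 23.0, 20.0, 20.0, 20.0, 20.0, 20.0]
    uplink = run_pipeline(raw, node)
    print(len(uplink), "summaries sent for", len(raw), "raw readings")
```

In this sketch the cloud receives one summary per five readings, which is the kind of load reduction the layered architecture aims for. A real node would also need to handle timeouts, partial batches, and lost connections.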

Fog vs Cloud vs Edge Computing

Fog computing, cloud computing, and edge computing are related but have key differences.

Cloud computing relies on centralized data centers to process and store data. Services are delivered over the internet, which can introduce latency.

Edge computing focuses on processing data directly on IoT devices themselves. It is useful for real-time data processing but can be limited by the device’s hardware.

Fog computing bridges these two approaches. It processes data at intermediate fog nodes, close to where the data is generated. This gives lower latency than sending everything to the cloud, and more computing power than an individual edge device can offer. The combination provides a balanced solution for a wide range of applications.
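One way to picture the trade-off is a small placement rule that picks the closest tier able to meet a task's deadline and compute demand. The latency and capacity figures below are illustrative assumptions, not measurements, and `place_task` is a hypothetical helper written for this example.

```python
# Illustrative numbers only; real latency and capacity depend on the deployment.
TIERS = [
    # (name, assumed round-trip latency in ms, assumed relative compute capacity)
    ("edge", 1, 1),
    ("fog", 10, 10),
    ("cloud", 100, 1000),
]


def place_task(deadline_ms, compute_units):
    """Return the closest tier that meets the deadline and has enough capacity.

    Tiers are checked from nearest to farthest. Returns None when no tier
    satisfies both constraints.
    """
    for name, latency_ms, capacity in TIERS:
        if latency_ms <= deadline_ms and compute_units <= capacity:
            return name
    return None


if __name__ == "__main__":
    print(place_task(5, 1))      # light task with a tight deadline
    print(place_task(20, 5))     # too heavy for the edge device, still urgent
    print(place_task(500, 500))  # heavy task with a relaxed deadline
```

Under these assumed numbers the three calls resolve to the edge, fog, and cloud tiers respectively, which mirrors the comparison above: the fog tier picks up work that is too heavy for a device but too urgent for a round trip to the cloud.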


Implementation and Applications

Fog computing, with its ability to process data near the edge, offers numerous advantages like low latency, enhanced security, and improved efficiency. Below, we explore some practical implementations and discuss current challenges and advancements in this technology.

Real-World Use Cases

Smart Cities use fog computing to manage traffic, reduce energy consumption, and monitor public safety. For instance, traffic systems can process sensor data in real time to optimize signal timings and reduce congestion.
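As a rough illustration of local signal-timing logic, the sketch below divides one signal cycle among the approaches to an intersection in proportion to their queue lengths. The function name, the 60-second cycle, and the minimum green time are assumptions made for the example; real controllers also account for yellow and all-red intervals, pedestrians, and coordination with neighboring junctions.

```python
def split_green_time(queue_lengths, cycle_s=60.0, min_green_s=5.0):
    """Divide one signal cycle among approaches in proportion to queue length.

    queue_lengths maps an approach name to its current vehicle count.
    Every approach gets min_green_s; the remaining time is shared by demand.
    """
    if not queue_lengths:
        return {}
    spare = cycle_s - min_green_s * len(queue_lengths)
    if spare < 0:
        raise ValueError("cycle too short for the minimum green times")
    total = sum(queue_lengths.values())
    plan = {}
    for approach, queued in queue_lengths.items():
        # With no vehicles anywhere, fall back to an even split.
        share = queued / total if total > 0 else 1 / len(queue_lengths)
        plan[approach] = min_green_s + spare * share
    return plan


if __name__ == "__main__":
    print(split_green_time({"north": 30, "east": 10}, cycle_s=60.0, min_green_s=10.0))
```

Because the calculation needs only counts from nearby sensors, it can run on a fog node at or near the intersection without a round trip to the cloud.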

Smart Healthcare leverages fog computing for real-time patient monitoring and data analytics. Integrating IoT devices with fog computing systems allows for immediate response and enhances the quality of service (QoS) in remote care.

Industrial IoT benefits significantly from fog computing. Factories use it for predictive maintenance, reducing downtime by analyzing machinery data locally rather than sending it to the cloud.
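A minimal sketch of local machinery analysis is shown below, assuming a simple rolling z-score check on a single sensor stream. The `VibrationMonitor` class, its window size, and its threshold are hypothetical; production predictive-maintenance systems typically use richer models and several signals.

```python
from collections import deque
from statistics import mean, pstdev


class VibrationMonitor:
    """Hypothetical fog-side check that flags readings far from recent history."""

    def __init__(self, window=20, threshold=3.0, min_samples=5):
        self.history = deque(maxlen=window)  # recent normal readings
        self.threshold = threshold           # allowed deviation in std devs
        self.min_samples = min_samples       # readings needed before judging

    def check(self, value):
        """Return True if value is anomalous relative to the recent window."""
        anomalous = False
        if len(self.history) >= self.min_samples:
            mu = mean(self.history)
            sigma = pstdev(self.history)
            if sigma > 0 and abs(value - mu) / sigma > self.threshold:
                anomalous = True
        if not anomalous:
            # Only normal readings update the baseline.
            self.history.append(value)
        return anomalous


if __name__ == "__main__":
    monitor = VibrationMonitor(window=10)
    for reading in [1.0, 1.1, 0.9, 1.0, 1.1, 0.9, 5.0, 1.0]:
        if monitor.check(reading):
            print("alert: unusual vibration level", reading)
```

Only the alert, not the full sensor stream, would need to leave the factory floor in this arrangement.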

Challenges and Advancements

Security and Privacy Issues remain a critical challenge. Fog nodes are spread across many locations, often outside physically secured data centers, which gives attackers more points of entry than a centralized cloud. Ensuring data security and privacy in such environments requires robust protocols.

Scalability is another vital concern. The system must manage a growing number of connected devices without compromising performance. Current work on scalable architectures focuses on optimizing resource allocation.

Performance and Latency are areas of ongoing improvement. Low latency is essential for applications like autonomous vehicles and smart healthcare. Ongoing research aims to enhance fog computing architectures for seamless connectivity and rapid data processing.
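To make the idea of resource allocation concrete, here is a small sketch of one common baseline: a greedy scheduler that hands each incoming task to whichever fog node currently carries the least load. The function name, the task costs, and the node identifiers are assumptions for illustration, and this heuristic is only one of many possible allocation strategies.

```python
import heapq


def assign_tasks(task_costs, node_ids):
    """Greedily assign each task to the currently least-loaded node.

    task_costs is a list of estimated costs (arbitrary units).
    Returns a dict mapping node id to the list of task indexes it received.
    """
    heap = [(0, node_id) for node_id in node_ids]  # (current load, node id)
    heapq.heapify(heap)
    assignment = {node_id: [] for node_id in node_ids}
    for index, cost in enumerate(task_costs):
        load, node_id = heapq.heappop(heap)
        assignment[node_id].append(index)
        heapq.heappush(heap, (load + cost, node_id))
    return assignment


if __name__ == "__main__":
    print(assign_tasks([5, 3, 2, 4], ["fog-a", "fog-b"]))
```

Using a heap keeps each assignment at logarithmic cost in the number of nodes, which matters as the number of connected devices and nodes grows.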

Implementing fog computing demands addressing these challenges while leveraging its potential to improve various applications from smart cities to healthcare.

Frequently Asked Questions

Fog computing brings computing closer to connected devices. This section addresses common queries about its differences from cloud computing and how it benefits IoT.

What are the primary differences between fog computing and cloud computing?

Cloud computing processes and stores data in centralized data centers, while fog computing extends those capabilities to the network edge. Fog computing processes data closer to the source rather than relying solely on remote servers. This reduces latency and supports real-time applications.

How does fog computing architecture enhance Internet of Things (IoT) deployments?

Fog computing reduces the data transmission load to central clouds. It processes data locally, making IoT deployments more responsive. This localized processing helps in situations where quick decision-making is crucial.

Can you provide examples of real-world applications that utilize fog computing?

Smart cities use fog computing for traffic management by processing data from sensors on-site. Manufacturing plants employ it for real-time monitoring of equipment to prevent downtime. Healthcare providers use it to monitor patient data and provide timely alerts.

What are the main advantages of implementing fog computing in a network infrastructure?

Fog computing reduces latency by processing data locally. It minimizes bandwidth usage by filtering data before sending it to the cloud. This local processing also enhances security by keeping sensitive data closer to its source.
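The filtering step mentioned above can be as simple as a report-on-change rule. The sketch below assumes a deadband filter, which forwards a reading only when it differs from the last forwarded value by more than a tolerance; the function names and the tolerance value are hypothetical choices for this example.

```python
def deadband_filter(readings, tolerance):
    """Forward a reading only if it moved more than tolerance since the last one sent."""
    forwarded = []
    last_sent = None
    for value in readings:
        if last_sent is None or abs(value - last_sent) > tolerance:
            forwarded.append(value)
            last_sent = value
    return forwarded


def bandwidth_saved(raw_count, forwarded_count):
    """Fraction of messages that never had to leave the fog node."""
    if raw_count == 0:
        return 0.0
    return 1 - forwarded_count / raw_count


if __name__ == "__main__":
    raw = [20.0, 20.1, 20.2, 21.0, 21.1, 25.0]
    sent = deadband_filter(raw, tolerance=0.5)
    print(sent, bandwidth_saved(len(raw), len(sent)))
```

How much this saves depends entirely on how quickly the signal changes and on the tolerance the application can accept.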

How does fog computing integrate with existing edge computing paradigms?

Fog computing can be seen as an extension of edge computing. It connects edge devices and the cloud, processing data at various points within the network. This hierarchical arrangement ensures efficient data handling and resource allocation.

What technologies form the core of fog computing solutions?

Fog computing solutions use various technologies including edge routers, gateways, and local servers. These devices collaborate to process, store, and analyze data close to where it is generated. Integrating software that supports distributed computing and virtualization is also essential.